Since the goal of machine learning is to give machines human-like intelligence, why not try to build a model that’s structured like the human brain?

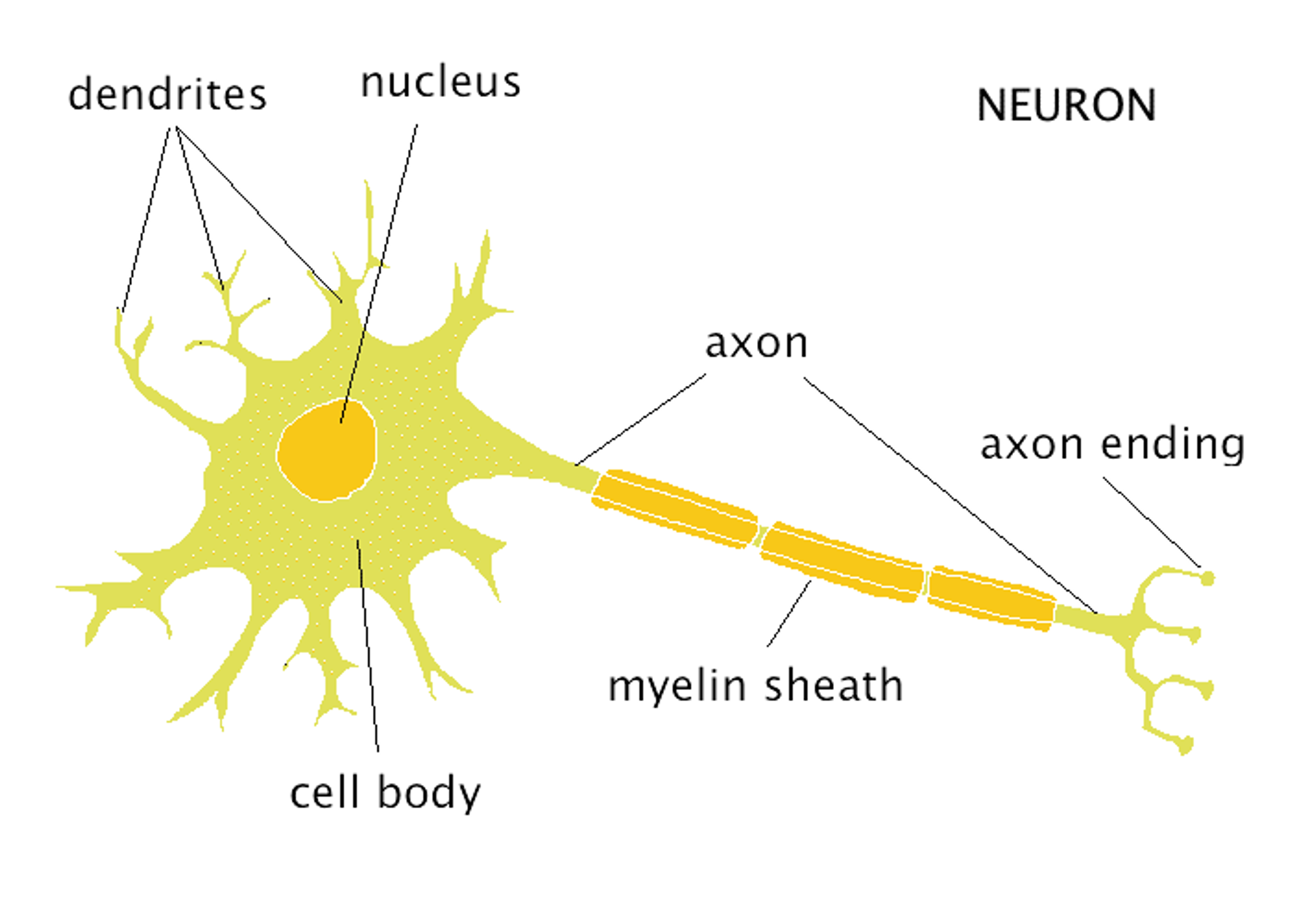

The Brain

At the most basic level, the brain is a collection of neurons, each of which has dendrites and an axon. A neuron collects signals through its dendrites, processes the information it receives, and sends the result out as an electrical signal through its axon.

While one neuron won’t do much, linking many of them together into a network structure allows for a lot more complexity and intelligence. In fact, the human brain has around 86 billion neurons!

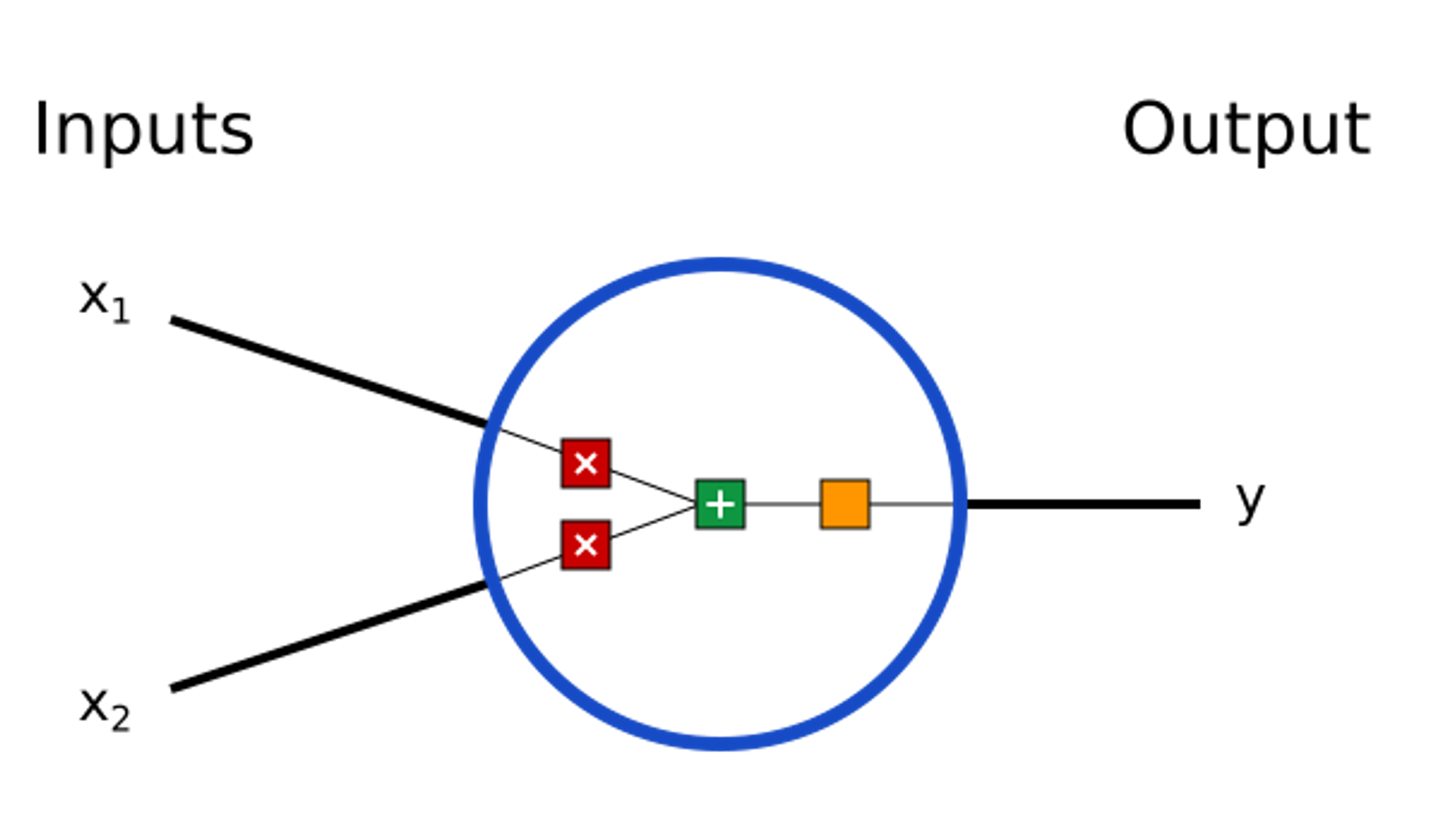

Neurons

Turns out, neural networks borrow their structure from the brain: a collection of neurons that form a network. Here’s what an individual neuron looks like:

We can already notice some similarities with the biological neuron:

- The inputs correspond to the dendrites. Both are responsible for taking in some sort of information.

- The output corresponds with the axon. Both are responsible for outputting some sort of information.

- Finally, the stuff you see in the middle is what processes all the information, much like the cell body of a neuron.

However, while neurons in the brain rely on biological processes and chemicals to transfer and process information, the neurons we’re going to use for machine learning rely solely on math.
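To make "solely math" concrete, here is a minimal sketch of one artificial neuron. It assumes the most common formulation: multiply each input by a weight, add a bias, and pass the sum through an activation function (a sigmoid here). The names `neuron`, `weights`, and `bias` and all the numbers are hypothetical choices for illustration, not something this lesson specifies.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias):
    """One artificial neuron (assumed form): weighted sum plus bias, then activation."""
    # The "processing" step in the middle of the neuron.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The single value the neuron sends out, like a signal leaving through the axon.
    return sigmoid(z)


# Hypothetical inputs, weights, and bias chosen only for illustration.
output = neuron(inputs=[0.5, -1.0, 2.0], weights=[0.4, 0.3, -0.2], bias=0.1)
print(output)
```

In a real model the weights and bias are not picked by hand; they are the values that get adjusted during training.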

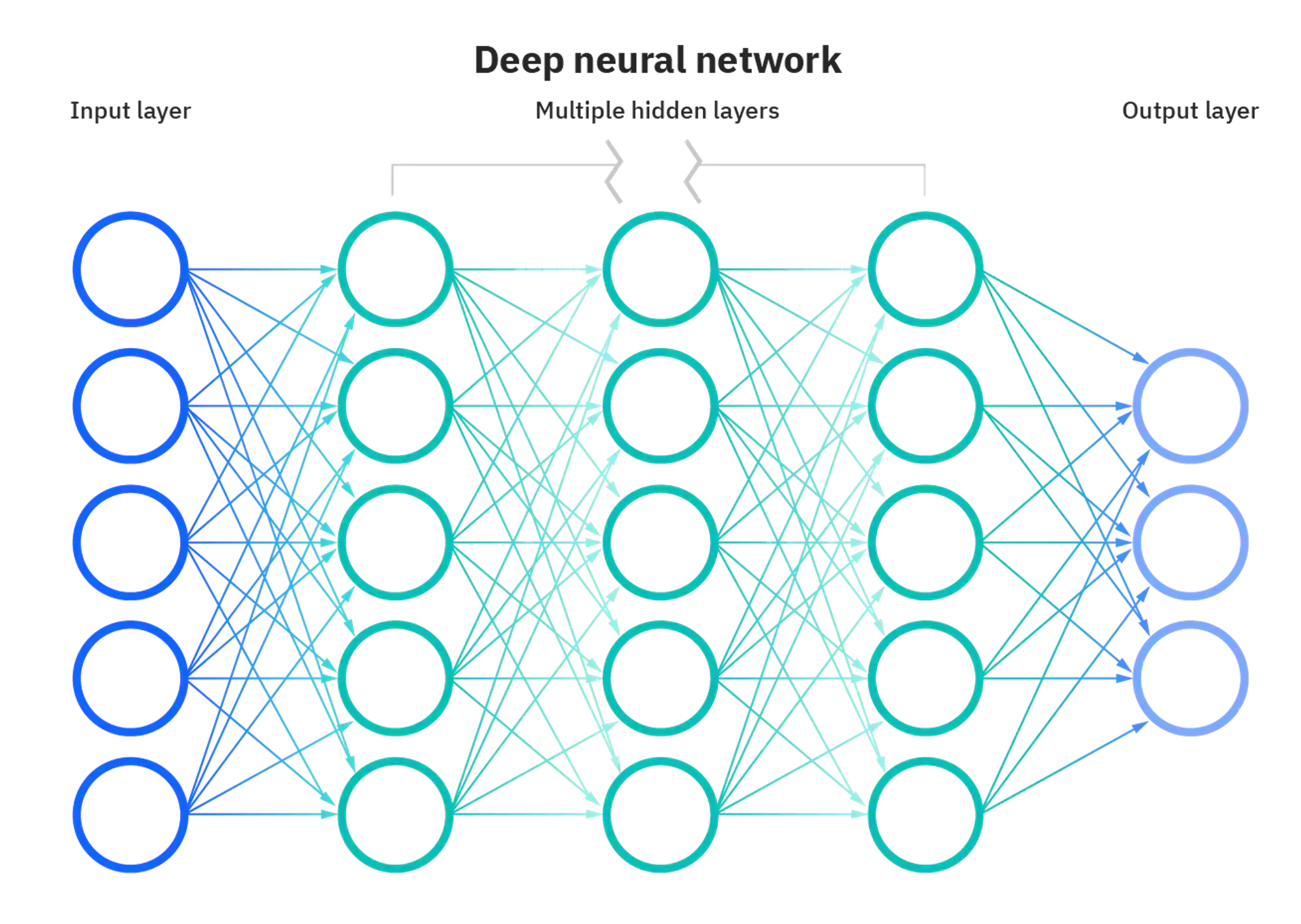

Neural Networks

By connecting many of these neurons together, we create a neural network:

As you can see, each circle in the diagram corresponds to a neuron, each of which collects inputs and sends outputs. Neurons are organized into layers: you can think of the input layer as the input to the neural network model (much like an input into linear regression or a decision tree), the hidden layers as what processes the input, and the output layer as the output of the model.

While many of the previous models we looked at typically have only one output, neural networks can have any number of outputs. You change the number of outputs by adding or removing neurons in the output layer.
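The layer structure can be sketched in a few lines of plain Python. This is an illustrative, untrained network: the helper names `make_layer` and `forward`, the layer sizes, and the random weights are all assumptions made for the example. It shows values flowing from the input, through a hidden layer, to an output layer whose size decides how many outputs the model has.

```python
import math
import random


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def make_layer(n_inputs, n_neurons, rng):
    """Build a layer as a list of (weights, bias) pairs, one pair per neuron."""
    return [
        ([rng.uniform(-1, 1) for _ in range(n_inputs)], rng.uniform(-1, 1))
        for _ in range(n_neurons)
    ]


def forward(layers, inputs):
    """Pass the inputs through each layer in turn; each layer's outputs feed the next."""
    values = inputs
    for layer in layers:
        values = [
            sigmoid(sum(x * w for x, w in zip(values, weights)) + bias)
            for weights, bias in layer
        ]
    return values


rng = random.Random(0)  # fixed seed so the random weights are reproducible

# 3 inputs -> hidden layer of 4 neurons -> output layer of 2 neurons
network = [make_layer(3, 4, rng), make_layer(4, 2, rng)]
outputs = forward(network, [0.5, -1.0, 2.0])
print(len(outputs))  # one value per neuron in the output layer
```

Swapping the last layer for `make_layer(4, 5, rng)` would give five outputs instead of two, without touching the rest of the network.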

Copyright © 2021 Code 4 Tomorrow. All rights reserved.

The code in this course is licensed under the MIT License.

If you would like to use content from any of our courses, you must obtain our explicit written permission and provide credit. Please contact classes@code4tomorrow.org for inquiries.