Basic Understanding

Convolutional Neural Networks, or CNNs, classify images based on the pixel values within those images, allowing computers to mimic human eyes and brain. It has multiple uses in the real world, from self driving cars to robotics, as it serves as the basis for computer vision applications. In this chapter, we will be using Tensorflow, a machine learning library, to allow computers to see.

Why Convolutional Networks?

In the last chapter, you learned about the basic network structure, but why use a different one for image recognition? CNNs can reduce the picture down through the process below, meaning the training process takes much less time compared to a Dense network, which needs to train on the full picture, taking more time and processing power. In the end, CNNs are better suited for image processing tasks, so it is natural to use them when we need to identify images.

How it Works

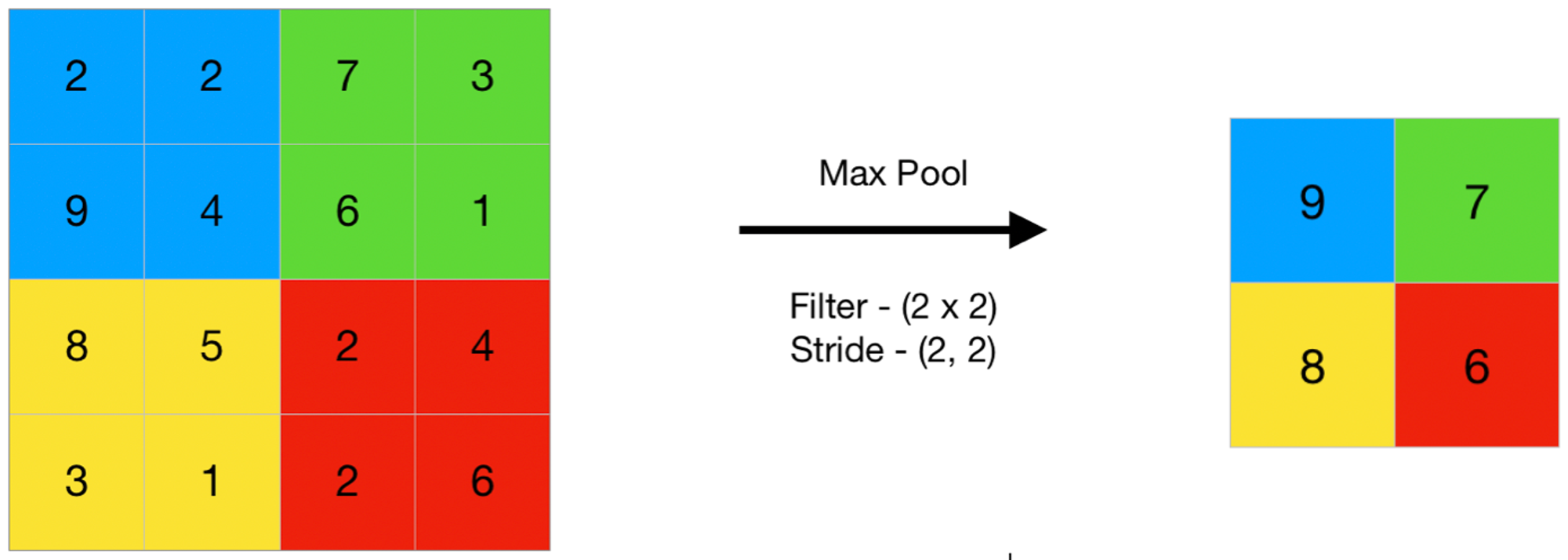

A CNN works by splitting pixel values into squares (the height and width are the ‘pool size’). As the CNN goes through the image, it looks at each pool/patch and, depending on the model, either takes the average or maximum of the pixels in that pool. We do this to reduce the amount of work for our network - by removing all the blank space, or white pixels, we give our neural network only the important pixels in the image. This, in turn, leads to faster computation, and reduces overfitting. Below is an image describing how max pooling works. Apart from Max Pooling, there are other types of pooling, such as Average Pooling, which takes the average of all the pixels, and Minimum Pooling, which takes the lowest pixel.

Next Section

Copyright © 2022 Code 4 Tomorrow. All rights reserved.

The code in this course is licensed under the MIT License.

If you would like to use content from any of our courses, you must obtain our explicit written permission and provide credit. Please contact classes@code4tomorrow.org for inquiries.